What is Edge AI and What is Edge AI Used For?

Have you heard of Edge AI but are not sure what it means or what it is used for?

No worries. In this guide, you will learn all about Edge AI, including:

- What is Edge AI?

- Advantages of using Edge AI

- What can Edge AI be used for?

- How to deploy Edge-based AI solutions?

- Examples of Edge devices to run AI

What is Edge AI

You have heard of AI (Artificial Intelligence) but what is Edge?

About Edge Computing

Edge computing is a distributed computing paradigm that brings computation and data storage closer to the devices where data is gathered. Instead of relying on a central location such as the cloud, edge computing keeps real-time data from suffering the bandwidth and latency issues that affect app performance.

To put it in simpler terms, instead of running processes in the cloud, edge computing runs them locally, on a computer, an IoT device, or an edge server. Bringing computation to the network edge reduces long-distance communication between client and server.

Combine it with AI = Edge AI

So, what does Edge AI mean? It means running AI algorithms locally on a hardware device using edge computing, where the algorithms work on data created on the device itself and do not require a network connection. This lets the device process data within a few milliseconds and give you real-time information.

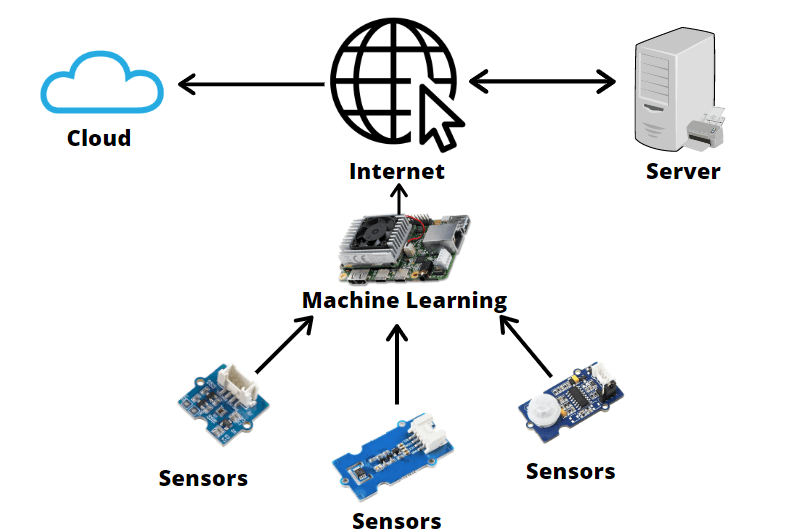

AI processing today is mostly done in cloud-based data centers, with deep learning models that require heavy compute capacity. With Edge AI, part of the AI workflow moves onto a device, and the data stays confined to that device.

With this kind of device, data can be curated before it is sent to a remote location for further analysis. Together with the availability of various field sensor data, this makes intelligent IoT management possible with AI at the edge. Inference can happen at the edge, which decreases the network traffic flowing back to the cloud and cuts the response time of IoT devices to a minimum. Management decisions also become available on-premises, close to the devices, which brings many advantages.

Traditionally, IoT configurations connected sensors or devices directly to the internet and sent raw data to a backend server, where the machine learning algorithms ran. What about now?

With Edge AI, machine learning algorithms run locally on a hardware device or embedded system instead of on servers.
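The difference between the two setups can be sketched in a few lines of Python. This is only an illustration under assumed conditions: the sensor readings, the `threshold` value, and the function names `cloud_payload` and `edge_payload` are all hypothetical, and a real device would run a trained model rather than simple statistics.

```python
import json
import statistics

def cloud_payload(readings):
    """Cloud-centric setup: every raw reading is uploaded for remote analysis."""
    return json.dumps({"readings": readings})

def edge_payload(readings, threshold):
    """Edge setup: analyse locally and upload only a compact summary."""
    summary = {
        "count": len(readings),
        "mean": round(statistics.mean(readings), 2),
        "max": max(readings),
        "alerts": sum(1 for r in readings if r > threshold),
    }
    return json.dumps(summary)

# Hypothetical temperature samples collected on the device.
readings = [21.0 + (i % 10) * 0.5 for i in range(1000)]
raw = cloud_payload(readings)
curated = edge_payload(readings, threshold=25.0)
print(len(raw), "bytes uploaded without edge processing")
print(len(curated), "bytes uploaded with edge processing")
```

The summary payload stays roughly the same size no matter how many samples the device collects, which is where the reduction in network traffic comes from.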

Depending on the AI application and device category, there are various hardware options for performing AI edge processing, such as CPUs, GPUs, ASICs, FPGAs, and SoC accelerators.

So now that you know what Edge AI is, what advantages does it actually bring?

Advantages of Edge AI

Now that we have briefly explained what Edge AI is, you can probably already tell what benefits come from moving AI processing to an edge device. Edge AI has many advantages, including:

Reduced Costs

With Edge AI, data communication and bandwidth costs are reduced because less data is transmitted. Performing AI processing in the cloud is also much more expensive because of the cost of the AI hardware involved. For Edge AI, you can easily get a development kit like a Sipeed MAIX GO Suit for less than $50!

Security

When AI is used in security cameras, autonomous cars, drones, and so on, data privacy is a big concern for people. Because Edge AI processes data locally, you avoid streaming and uploading large amounts of data to the cloud, which would leave you vulnerable from a privacy perspective. Processing with Edge AI is also very fast, on the order of a few milliseconds, and because less data travels over the network, there is less opportunity for it to be tampered with in transit.

Furthermore, Edge AI devices can include enhanced security features as well, making it even more secure.

Highly Responsive

As you probably know, Edge AI devices can process data much faster than centralized IoT models because nothing has to travel to a remote server. Data creation, decision, and action can all happen in real time, since insights are processed immediately within the same hardware. This makes them suitable for applications where milliseconds matter, like self-driving cars.

Easy to manage

Afraid that AI will be too complicated and hard for you to operate? No worries: Edge AI devices are self-contained and don’t require data scientists or AI developers to maintain them. Data and insights are either delivered automatically to where they need to be or are visible on the spot through highly graphical interfaces or dashboards.

What can Edge AI be used for?

Now that you know what Edge AI is and what its advantages are, what can it actually be used for? Here are some real-life Edge AI applications that you can try!

Surveillance and Monitoring Purposes

Before Edge AI, security cameras simply output a raw video signal and continuously streamed it to a cloud server. The large volume of video footage transferred to the cloud consumed a significant amount of bandwidth and put a heavy load on the cloud server.

With Edge AI, machine learning-enabled smart cameras can process captured images locally to identify and track multiple objects and people and detect suspicious activity directly on the edge. Camera footage no longer has to travel to the cloud server except when an event is triggered, which reduces bandwidth use. It also reduces remote processing and memory requirements, so the server can communicate with a larger number of cameras.
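As a rough sketch of this event-triggered behaviour, the snippet below filters a stream of frames on the camera and keeps only those that trigger an event. Everything here is an assumption made for illustration: `detect_person` stands in for a real on-device model, each frame is a plain dictionary with a precomputed score, and the 0.8 threshold is arbitrary.

```python
def detect_person(frame):
    """Stand-in for an on-device model; each frame carries a precomputed score."""
    return frame["person_score"] >= 0.8

def frames_to_upload(frames):
    """Keep only frames that trigger an event; everything else stays on the camera."""
    return [f for f in frames if detect_person(f)]

# Hypothetical stream: 100 frames, with a person visible in frames 40 to 44.
stream = [
    {"id": i, "person_score": 0.95 if 40 <= i < 45 else 0.05}
    for i in range(100)
]
events = frames_to_upload(stream)
print(f"uploaded {len(events)} of {len(stream)} frames")
```

In a real deployment the camera would typically upload a short clip around each event rather than single frames, but the principle of deciding locally what is worth sending is the same.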

Autonomous Vehicles

Because Edge AI processes data immediately within the same hardware, it enables real-time applications such as autonomous vehicles. To operate safely, an autonomous vehicle must recognize other vehicles, traffic signs, pedestrians, roads, and so on without delay. With Edge AI, the vehicle can identify all of this information on board and pass it to the main controller for immediate processing.
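To get a feel for why latency matters here, a quick calculation shows how far a vehicle travels while it waits for a decision. The speed and the two latency figures below are assumed values chosen for illustration, not measurements of any particular system.

```python
def distance_travelled_m(speed_kmh, latency_ms):
    """Distance in metres a vehicle covers while waiting latency_ms for a decision."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000

# Assumed figures for illustration only.
SPEED_KMH = 100
for label, latency_ms in [("cloud round trip", 100), ("on-device inference", 10)]:
    d = distance_travelled_m(SPEED_KMH, latency_ms)
    print(f"{label}: {latency_ms} ms -> {d:.2f} m travelled")
```

Under these assumptions, cutting the decision time by a factor of ten cuts the distance travelled "blind" by the same factor.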

Smart Speakers

You probably recognize smart speakers such as Google Home, Amazon Alexa devices, and the Apple HomePod, and they all use Edge AI. Wake words and phrases such as “Alexa” are trained into a machine learning model that is stored locally on the speaker. When the speaker hears the wake word, it starts listening to your request and streams the audio to a remote server, which processes the full request.
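The wake-word flow described above can be sketched as a small gate that decides what leaves the device. This is a toy model, not how any particular speaker is implemented: audio chunks are represented as text strings, `local_wake_word_score` is a hypothetical stand-in for the on-device model, and only the single chunk after the wake word is streamed.

```python
def local_wake_word_score(chunk):
    """Stand-in for the small on-device model; here it just looks for the word."""
    return 1.0 if "alexa" in chunk.lower() else 0.0

def process_audio(chunks, threshold=0.5):
    """Return the chunks that would be streamed to the remote server."""
    streamed = []
    listening = False
    for chunk in chunks:
        if listening:
            streamed.append(chunk)   # the request itself goes to the server
            listening = False        # one request per wake word in this toy example
        elif local_wake_word_score(chunk) >= threshold:
            listening = True         # wake word heard: stream the next chunk
    return streamed

audio = ["background chatter", "Alexa", "what is the weather today", "more chatter"]
print(process_audio(audio))
```

Everything heard before the wake word is discarded locally, so only the actual request is sent for full processing.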

Industrial IoT

In manufacturing, automating a factory to be more efficient and effective will require AI for tasks ranging from visual inspection for defects to robotic control for assembly. With Edge AI, you can deploy these AI capabilities at a reduced cost while still processing data quickly.

How to deploy Edge-based AI solutions?

Interested in Edge AI solutions but not sure how to get there? Building an Edge AI-based solution is not an easy task, as there are several steps involved, from creating an analytics model and deploying it to executing it at the edge. You will also have to collect data, prepare the data, select the algorithms, train them on a continuous basis, deploy and redeploy the models, and so on. There is indeed a lot to be done!
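The steps listed above (collect data, train, deploy, execute at the edge) can be illustrated with a deliberately tiny example. This is a minimal sketch under stated assumptions: the model is a simple perceptron rather than a deep network, the four training samples are made up, and "deployment" is just passing a JSON string from one function to another. The function names are hypothetical.

```python
import json

def train_perceptron(samples, labels, epochs=20, lr=0.1):
    """Train a tiny linear classifier (done on a workstation or in the cloud)."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            score = sum(w * xi for w, xi in zip(weights, x)) + bias
            err = y - (1 if score > 0 else 0)
            weights = [w + lr * err * xi for w, xi in zip(weights, x)]
            bias += lr * err
    return {"weights": weights, "bias": bias}

def export_model(model):
    """Serialise the model so it can be copied to the edge device."""
    return json.dumps(model)

def edge_predict(model_json, x):
    """Run inference on the device using only the exported parameters."""
    model = json.loads(model_json)
    score = sum(w * xi for w, xi in zip(model["weights"], x)) + model["bias"]
    return 1 if score > 0 else 0

# Hypothetical data: [temperature, vibration] readings, where 1 means "fault".
samples = [[0.2, 0.1], [0.3, 0.2], [0.8, 0.9], [0.9, 0.7]]
labels = [0, 0, 1, 1]
blob = export_model(train_perceptron(samples, labels))
print([edge_predict(blob, x) for x in samples])
```

Retraining and redeploying then amounts to producing a new exported model and replacing the one stored on the device.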

Afraid that it will be difficult? No worries, as there are plenty of tutorials available online where you can learn machine learning! For example, when you get a Jetson Nano at only $89, you can take a free self-paced online course for beginners called Getting Started with AI on Jetson Nano. In it, you will learn to collect image data and use it to train, optimize, and deploy AI models for custom tasks like recognizing hand gestures, as well as image regression for locating a key point in an image.

In addition, if you want to create your own projects, Jetson Nano has useful tools like the Jetson GPIO Python library, and is compatible with common sensors and peripherals, including many from Raspberry Pi.
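As a small taste of what the Jetson GPIO Python library looks like in use, here is a sketch that blinks an output pin. The library exposes an RPi.GPIO-style interface (`setmode`, `setup`, `output`, `cleanup`). So that the snippet runs on any machine, it uses a hypothetical `DryRunGPIO` stand-in that only records calls. The pin number 12 and the `blink` helper are likewise assumptions chosen for illustration.

```python
import time

class DryRunGPIO:
    """Hypothetical stand-in that mimics a few Jetson.GPIO names and records calls."""
    BOARD, OUT, HIGH, LOW = "BOARD", "OUT", 1, 0

    def __init__(self):
        self.calls = []

    def setmode(self, mode):
        self.calls.append(("setmode", mode))

    def setup(self, pin, direction):
        self.calls.append(("setup", pin, direction))

    def output(self, pin, value):
        self.calls.append(("output", pin, value))

    def cleanup(self):
        self.calls.append(("cleanup",))

def blink(gpio, pin, times=3, delay=0.5):
    """Toggle an output pin using an RPi.GPIO-style module passed in as `gpio`."""
    gpio.setmode(gpio.BOARD)          # refer to pins by their header position
    gpio.setup(pin, gpio.OUT)
    try:
        for _ in range(times):
            gpio.output(pin, gpio.HIGH)
            time.sleep(delay)
            gpio.output(pin, gpio.LOW)
            time.sleep(delay)
    finally:
        gpio.cleanup()                # release the pin even if interrupted

# Off-device dry run. On a Jetson with the library installed, you would instead
# `import Jetson.GPIO as GPIO` and call `blink(GPIO, pin=12)`.
fake = DryRunGPIO()
blink(fake, pin=12, times=2, delay=0)
print(fake.calls)
```

Passing the GPIO module in as a parameter keeps the blinking logic testable without hardware. On a real board you would check the pinout before choosing a pin.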

If you want to use different Edge AI hardware, tutorials are available for it too. The Coral Dev Board, for example, has its own getting-started tutorials.

As Edge AI is becoming the next big thing now, there are many tutorials readily available online, so don’t worry!

Examples of Edge devices to run AI

Want to get started with Edge AI? Here are a few of our best-selling development boards that we feel will be perfect for you!

NVIDIA® Jetson AGX Orin™ Developer Kit

Coming in first, we have the Jetson AGX Orin Developer Kit! Released on March 22, 2022, Orin is described as the world’s smallest, most powerful, and most energy-efficient AI computer.

- A Giant Leap Forward for Robotics and Edge AI: up to 275 TOPS and 8X the performance of NVIDIA® Jetson AGX Xavier™ in the same compact form-factor, power configurable between 15W and 50W

- New NVIDIA Ampere GPU and Arm Cortex-A78AE CPU: 2048 NVIDIA CUDA® cores and 64 Tensor Cores, 12-core Arm® Cortex®-A78AE v8.2 64-bit CPU

- Enable multiple concurrent AI applications: on-board 64GB eMMC, 204 GB/s of memory bandwidth, and 32 GB of DRAM

If you want to see more info here is the link: NVIDIA® Jetson AGX Orin™ Developer Kit

NVIDIA Jetson AGX Xavier Development Kit

With the NVIDIA Jetson AGX Xavier developer kit, you can easily create and deploy end-to-end AI robotics applications for manufacturing, delivery, retail, agriculture, and more.

Supported by NVIDIA JetPack and DeepStream SDKs, as well as CUDA®, cuDNN, and TensorRT software libraries, the kit provides all the tools you need to get started right away.

- More than 20X the performance and 10X the energy efficiency of the NVIDIA Jetson TX2

If you want to see more info here is the link: NVIDIA Jetson AGX Xavier Development Kit

NVIDIA® Jetson Nano™ Developer Kit

Next, we have the Jetson Nano! If you are looking to do Edge AI but also want to use the SBC as a desktop, the Jetson Nano from NVIDIA can do just that! In addition, this module is cost-effective and compact too!

- The NVIDIA® Jetson Nano™ Developer Kit delivers computing performance to run modern AI workloads at unprecedented size, power, and cost. Developers, learners, and makers can now run AI frameworks and models for applications like image classification, object detection, segmentation, and speech processing.

- The developer kit can be powered by micro-USB and comes with extensive I/Os, ranging from GPIO to CSI. This makes it simple for developers to connect a diverse set of new sensors to enable a variety of AI applications. It’s incredibly power-efficient, consuming as little as 5 watts.

- Jetson Nano is also supported by NVIDIA JetPack, which includes a board support package (BSP), Linux OS, NVIDIA CUDA®, cuDNN, and TensorRT™ software libraries for deep learning, computer vision, GPU computing, multimedia processing, and much more. The software is even available using an easy-to-flash SD card image, making it fast and easy to get started.

- The same JetPack SDK is used across the entire NVIDIA Jetson™ family of products and is fully compatible with NVIDIA’s world-leading AI platform for training and deploying AI software. This proven software stack reduces complexity and overall effort for developers.

- And of course, as mentioned above, there is an online course for you to get started on so don’t worry if you are a beginner to Edge AI.

By the way, you are in luck! Get a Jetson Nano now, as we have just lowered the price of the NVIDIA Jetson Nano to only $89 (usual price $99)!

Get the NVIDIA Jetson Nano to get started on your Machine Learning and AI journey now!

Specs:

| Specs | NVIDIA® Jetson Nano™ Developer Kit |
|---|---|
| CPU | Quad-core ARM® A57 CPU |
| GPU | 128-core NVIDIA Maxwell™ GPU |
| RAM | 4 GB 64-bit LPDDR4 |
| Price | $89 |

Don’t want a whole developer kit and just need the module to design your own product? We offer it too!

NVIDIA Jetson Nano Module

The NVIDIA Jetson Nano Module is the SoM (system-on-module) used in the Jetson Nano Developer Kit. It can be combined with an extension board to assemble an SBC for graphical AI applications.

Interested in designing a carrier board for the Jetson Nano? You can download the full design files at Jetson Download Centre.

If you are looking for more computing power than the Jetson Nano offers, check out the next Edge AI device!

NVIDIA Jetson TX2 Developer Kit

Boasting 1.3 TOPS of computing power, the Jetson TX2 is much more powerful than the Jetson Nano.

NVIDIA Jetson TX2 Developer Kit contains an NVIDIA Jetson TX2 Module and many other accessories as a kit. It is mainly developed for AI and deep learning applications. It can give you a fast, easy way to develop software and hardware for the Jetson TX2 AI supercomputer on a module. Jetson TX2 is ideal for applications requiring high computational performance in a low power envelope.

The NVIDIA Jetson TX2 Developer Kit also supports the JetPack SDK on Linux, which includes the BSP and libraries for deep learning, computer vision, GPU computing, multimedia processing, and much more.

Specs:

| Specs | NVIDIA Jetson TX2 Developer Kit |
|---|---|
| CPU | Dual-core NVIDIA Denver 2 64-bit processor and quad-core ARM Cortex-A57 MPCore unit |
| GPU | 256-core Pascal @ 1300 MHz |
| RAM | 8 GB 128-bit LPDDR4 @ 1866 MHz, 59.7 GB/s |
| Price | $399.00 |

Like the Jetson Nano, it is also available as an embeddable module.

NVIDIA Jetson TX2 Module

Explore new AI capabilities at the edge with the NVIDIA Jetson TX2. This embedded computer lets you run neural networks with double the compute performance or double the power efficiency of Jetson TX1—at the same price.

As part of the world’s leading AI computing platform, Jetson TX2 Module works with NVIDIA’s rich set of AI tools and workflows, which enable developers to train and deploy neural networks quickly.

It comes in two memory options: 4 GB at $299 and 8 GB at $458.

NVIDIA Jetson TX2i Module

In addition to the standard TX2 (8 and 4 GB flavors), NVIDIA also created a hardened industrial version known as the TX2i. Compared to its standard cousin, the TX2i can withstand greater vibration, temperature and humidity ranges, and dust.

With a ten-year estimated lifespan, it lasts twice as long as the TX2. All of this means a higher, more “industrial” price tag of $789, and slightly elevated power requirements.

Besides the Jetson Edge AI devices, we also have plenty of other Edge AI modules available.

Sipeed Maixduino Kit for RISC-V AI + IoT

Want to start your AI journey at a low cost with an Arduino-compatible interface? This kit will be perfect for you! Meet the Sipeed Maixduino, which is based on the MAIX module.

- The Maixduino is a RISC-V 64 development board for AI + IoT applications. Unlike other Sipeed MAIX development boards, the Maixduino is designed in an Arduino Uno form factor, with an ESP32 module on board alongside the MAIX AI module.

- MAIX is Sipeed’s purpose-built product series designed to run AI at the edge. It moves AI models from the cloud down to devices on the edge of the network, where they can run faster, at a lower cost, and with greater privacy.

- This kit comes with an OV2640 camera module and a 2.4-inch TFT display.

- The display is a slim 2.4-inch 240×320 TFT LCD with a 24-pin FPC interface. Its high resolution and wide viewing angle make it a good fit for AI projects such as face detection.

- The camera is a 2-megapixel OV2640 module with an f3.6mm lens and a 24-pin FPC interface. Its wide-angle lens and 1632 x 1232 resolution make it a good fit for your AI tasks.

Specs:

| Specs | Sipeed Maixduino Kit for RISC-V AI + IoT |
|---|---|
| CPU | RISC-V Dual Core 64bit, with FPU; 400MHz neural network processor |
| GPU | QVGA@60FPS/VGA@30FPS image identification |
| RAM | SD Card |
| Price | $23.90 |

Interested in more Sipeed products? Here is another of our best sellers!

Sipeed MAix BiT for RISC-V AI+IoT

- Also built on the Sipeed MAIX module, the MAix BiT is a cost-effective Edge AI solution that is breadboard-friendly!

- Sipeed MAIX is quite similar to the Google Edge TPU, but it acts as a master controller rather than an accelerator, so it costs less and draws less power than an AP + Edge TPU solution.

- Being breadboard-friendly, the MAix BiT lets DIYers build their own projects!

- At 1×2 inches, it is twice the size of the M1 module, and it is also SMT-able.

- It integrates a USB2UART chip, an auto-download circuit, an RGB LED, a DVP camera FPC connector (supporting small FPC cameras and standard M12 cameras), an MCU LCD FPC connector (supporting our 2.4-inch QVGA LCD), and a TF card slot.

- The MAix BiT lets you adjust the core voltage from 0.8V to 1.2V and overclock to 800MHz!

Specs:

| Specs | Sipeed MAix BiT for RISC-V AI+IoT |
|---|---|
| CPU | RISC-V Dual Core 64bit, with FPU; 400MHz neural network processor |
| GPU | KPU (Neural Network Processor) 64 KPU, 576bit width, support convolution kernels, any form of activation function. It offers [email protected],400MHz, when overclock to 800MHz, |
| RAM | 8MB high-speed SRAM |
| Price | $12.90 |

Raspberry Pi 4 Computer Model B

Coming up next, we have the Raspberry Pi 4! If you are a beginner looking to start learning electronics with an SBC (single-board computer), the Raspberry Pi 4 is highly recommended: it has one of the biggest communities and plenty of support for debugging, and it has become a must-buy for makers and tech enthusiasts. Of course, it can perform Edge AI as well.

- The Raspberry Pi 4 offers impressive speed and performance compared to previous models while staying affordable, at the same $35 price as the previous Raspberry Pi 3 Model B+.

- For the price, it features a Broadcom BCM2711 quad-core Cortex-A72 (ARM v8) 64-bit SoC at 1.5GHz with a VideoCore VI graphics processing unit (GPU) handling all graphical input and output. It can cope with 4K resolution and H.265 video, as well as video scaling, camera input, and all HDMI and composite video outputs. The Broadcom BCM2711 also has ‘proper’ USB 3.0 and Gigabit Ethernet interfaces!

- Its dual-band wireless LAN and Bluetooth have modular compliance certification, allowing the board to be designed into end products with significantly reduced compliance testing, improving both cost and time to market.

- Believe it or not, the Raspberry Pi 4 has desktop performance comparable to entry-level x86 PC systems and is also capable of running machine learning and edge AI!

| Specs | Raspberry Pi 4 |
|---|---|
| CPU | Broadcom BCM2711, quad-core Cortex-A72 (ARM v8) 64-bit SoC @ 1.5GHz |
| GPU | Broadcom VideoCore VI |
| RAM | 1 GB, 2 GB, or 4 GB LPDDR4 SDRAM |
| Price | $35 (1 GB RAM), $45 (2 GB RAM), $55 (4 GB RAM) |

Coral Dev Board

Want a single-board computer for accelerated ML processing in a small form factor that also works as an evaluation kit? This board has everything you need!

- Designed for use with TensorFlow Lite, it features an Edge TPU module engineered to deliver high-performance machine learning inference, which lets users quickly prototype on-device machine learning products.

- Built around the NXP i.MX 8M SoC (quad-core Cortex-A53, plus Cortex-M4F) combined with the Edge TPU, it offers strong performance while remaining power-efficient.

- You can use the Dev Board as a single-board computer for accelerated ML processing in a small form factor or as an evaluation kit for the SOM that’s on board. The 40 mm × 48 mm SOM on the Dev Board is available at volume. It can be combined with your custom PCB hardware using board-to-board connectors for integration into products.

- The baseboard includes all the peripheral connections you need to prototype a project, including USB 2.0/3.0 ports, a DSI display interface, a CSI2 camera interface, an Ethernet port, speaker terminals, and a 40-pin GPIO header.

- The onboard Edge TPU provides high-performance ML inferencing at a low power cost. For example, it can execute state-of-the-art mobile vision models such as MobileNet v2 at 100+ fps in a power-efficient manner.

| Specs | Coral Dev Board |
|---|---|
| CPU | NXP i.MX 8M SOC (quad Cortex-A53, Cortex-M4F) |
| GPU | Integrated GC7000 Lite Graphics |
| ML Accelerator | Google Edge TPU coprocessor |
| RAM | 1 GB LPDDR4 |
| Price | $149.99 |

Summary

Instead of being told what to do, computers are now taught how to learn. As AI and machine learning become more developed and advanced on the edge, new possibilities will be discovered, along with growing demand for IoT devices, 5G networks, AI smart devices, and more.

What are your thoughts about Edge AI and edge devices? Let us know in the comments down below!

Interested in more AI products? Here at Seeed, we offer over 40 AI-related products, from development boards and modules to robot car kits! Check out our bazaar to find out more!