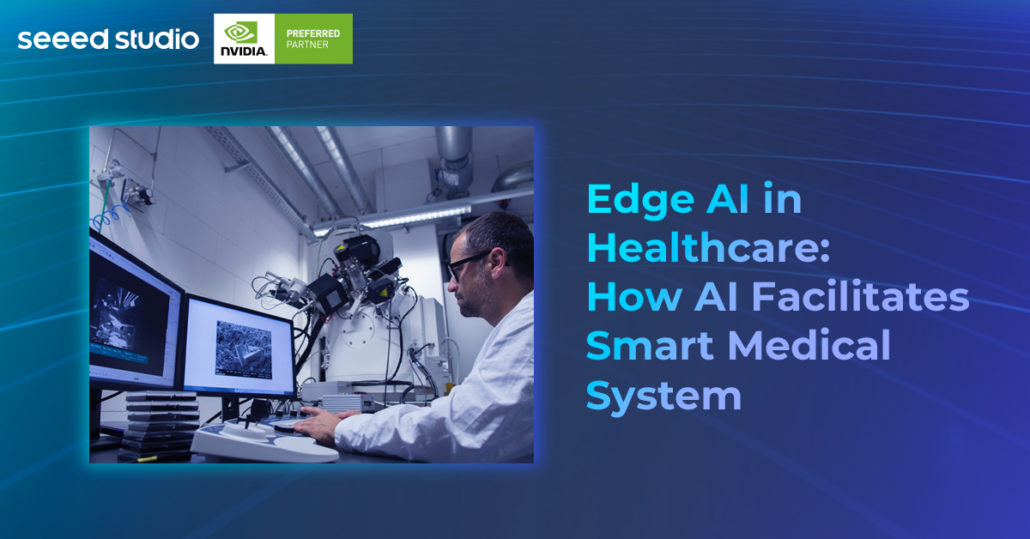

Edge AI in Healthcare Enables Smart Medical Industry with Digital Diagnosis and Remote Patient Monitoring

Digital transformation is essential for any traditional industry that wants to adopt new technologies, strengthen its expertise, and reduce operating costs. In healthcare, AI supports many roles: doctors, laboratories, researchers, caregivers, and patients.

Traditional Hospital Operation vs. AI-powered Smart Medical System

Traditional medical devices usually offered limited functions and were designed for general-purpose use. The absence of key technologies often left doctors unable to respond quickly to changes in a patient’s condition. Even when newer technology such as remote patient monitoring was introduced into the medical system, it was still inconvenient for caregivers to watch over patients around the clock.

Benefits of Edge AI

Edge AI offers low-latency, high-bandwidth data transmission and the ability to process massive amounts of data, which can help healthcare teams make critical and urgent decisions for patients. You can learn more about what Edge AI is and how to implement it with embedded hardware in this article.

AI-driven tools can support clinicians by detecting abnormalities faster and more accurately, helping professionals diagnose with greater accuracy, improving the quality of pathological image recognition, and streamlining hospital workflows, from medical documentation to the overall turnaround time of the whole healthcare team.

Remote patient monitoring

Imagine a patient who needs close care and prompt, safe assistance. A trained AI model that detects when the patient has shifted from a safe state toward the predicted boundary of an unsafe state can alert a caregiver or nurse that immediate attention is needed.

The whole system is driven by a small computer combined with a camera that captures patient behavior continuously as a video stream, providing the data used to train the edge AI model and to run its predictions. When a labeled event is triggered, the medical team is notified immediately.
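As a rough illustration of the alerting step, the sketch below turns a stream of per-frame state predictions into alerts. It is a minimal sketch, not the logic of any specific product: the state labels, the `AlertMonitor` class, and the three-frame debounce threshold are all hypothetical choices made for this example.

```python
from dataclasses import dataclass, field

# Hypothetical labels a per-frame model might output (assumptions for this sketch).
UNSAFE_STATES = {"leaving_bed", "falling"}


@dataclass
class AlertMonitor:
    """Raise one alert per unsafe episode, after several consecutive unsafe frames."""

    min_unsafe_frames: int = 3  # assumed debounce threshold to suppress one-frame glitches
    _unsafe_run: int = field(default=0, init=False)
    _alerted: bool = field(default=False, init=False)

    def update(self, label: str) -> bool:
        """Feed one per-frame prediction; return True when an alert should fire."""
        if label in UNSAFE_STATES:
            self._unsafe_run += 1
        else:
            # Back in a safe state: reset so the next episode can alert again.
            self._unsafe_run = 0
            self._alerted = False
        if self._unsafe_run >= self.min_unsafe_frames and not self._alerted:
            self._alerted = True
            return True
        return False


def alert_frames(labels, min_unsafe_frames=3):
    """Return the frame indices at which an alert would be sent."""
    monitor = AlertMonitor(min_unsafe_frames=min_unsafe_frames)
    return [i for i, label in enumerate(labels) if monitor.update(label)]


if __name__ == "__main__":
    stream = ["lying", "lying", "falling", "lying",
              "falling", "falling", "falling", "falling"]
    print(alert_frames(stream))
```

Requiring several consecutive unsafe frames is one simple way to avoid paging the medical team on a single misclassified frame; the right threshold depends on the camera frame rate and how quickly staff need to be notified.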

Here are the benefits of an AI-driven medical device:

- Reduce the chance of human oversight during monitoring, with more precise recognition of falls and other unexpected patient states and a clearer distinction between patient behaviors;

- Eliminate the need for 24/7 manual monitoring, reducing the staffing required;

- Go beyond identifying the falling pose, which alone does not address the underlying problem. It is more important to use edge AI models trained on the collected data to figure out why a dangerous situation arises, such as why the patient falls. Is it because the walker isn’t close enough to the bed? Or is it because the bed area is too small?

Get the most out of computer vision

Pose Estimation

alwaysAI makes it easy to work with computer vision models and supports running inference at the edge on NVIDIA Jetson. It provides a catalog of pre-trained models, a low-code model training toolkit, and a powerful set of APIs to help developers at all levels build and customize computer vision applications.

You can use AI to detect and track specific types of objects, people, and fall events, train CV models to track patients’ movements or body poses, and predict when a fall event occurs so that an alert is sent to caregivers based on established criteria.
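To make "established criteria" concrete, here is a minimal sketch of one possible rule applied to body-pose keypoints: it measures how far the torso is tilted from vertical. It does not use the alwaysAI API; the keypoint names, the `looks_horizontal` helper, and the 60-degree threshold are assumptions for illustration, and the keypoints could come from any pose estimation model.

```python
import math


def midpoint(a, b):
    """Midpoint of two (x, y) image points."""
    return ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)


def torso_angle_deg(shoulder_mid, hip_mid):
    """Angle between the hip-to-shoulder line and the image's vertical axis.

    0 degrees means an upright torso, 90 degrees means a horizontal one.
    """
    dx = shoulder_mid[0] - hip_mid[0]
    dy = shoulder_mid[1] - hip_mid[1]
    return math.degrees(math.atan2(abs(dx), abs(dy)))


def looks_horizontal(keypoints, threshold_deg=60.0):
    """Return True when the torso is tilted past the (assumed) threshold."""
    shoulders = midpoint(keypoints["left_shoulder"], keypoints["right_shoulder"])
    hips = midpoint(keypoints["left_hip"], keypoints["right_hip"])
    return torso_angle_deg(shoulders, hips) > threshold_deg


if __name__ == "__main__":
    standing = {
        "left_shoulder": (100, 100), "right_shoulder": (120, 100),
        "left_hip": (100, 200), "right_hip": (120, 200),
    }
    print(looks_horizontal(standing))
```

A horizontal torso on its own cannot tell a fall from a patient lying in bed, so a rule like this would normally be combined with timing or location criteria before an alert is sent.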

Object Detection

Roboflow powers end-to-end CV solutions for top-tier healthcare companies, guiding you step by step through building a computer vision model for a healthcare problem. First, decide which objects you need to detect and classify in your specific scenario. Then label the data you need from images such as ultrasounds, X-rays, endoscopy, thermography, MRIs, and security feeds. Finally, upload the labeled images to Roboflow so the resulting model can be used in a medical setting with improved accuracy. Roboflow hosts dozens of public datasets, including BCCD, an object detection dataset containing images of blood cells. To deploy a custom model to a Jetson device, check out our wiki tutorial on how to annotate quickly with Roboflow, train a custom YOLOv5 model, and run inference at the edge!
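For readers curious what a labeled example looks like once exported for YOLOv5 training, the sketch below converts one pixel-space bounding box into a YOLO-format label line (`class x_center y_center width height`, all normalized to the image size). Annotation tools such as Roboflow normally generate these files for you, so this is only an illustration; the `to_yolo_line` helper and the sample box are hypothetical.

```python
def to_yolo_line(class_id, box, image_width, image_height):
    """Convert a pixel box (x_min, y_min, x_max, y_max) to a YOLO label line."""
    x_min, y_min, x_max, y_max = box
    inside = 0 <= x_min < x_max <= image_width and 0 <= y_min < y_max <= image_height
    if not inside:
        raise ValueError("box must be non-empty and lie inside the image")
    # YOLO labels store the box center and size as fractions of the image size.
    x_center = (x_min + x_max) / 2 / image_width
    y_center = (y_min + y_max) / 2 / image_height
    width = (x_max - x_min) / image_width
    height = (y_max - y_min) / image_height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


if __name__ == "__main__":
    # Hypothetical annotation: class 0 in a 640x480 image.
    print(to_yolo_line(0, (100, 50, 300, 250), 640, 480))
    # prints "0 0.312500 0.312500 0.312500 0.416667"
```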

Other approaches for designing a smart monitoring system:

- Hidden Markov Model:

  - Identify the patient’s status (standing, lying down, sitting, walking, or falling) and distinguish between falling and lying down. It can also estimate the likelihood of a fall based on the previous state of the body.

- TensorRT:

  - Track semantic key points to build a holistic perception framework with the open-source library OpenPifPaf or with TensorRT. Interested in learning more about NVIDIA TensorRT? Check out our blog post on how to supercharge your inference with TensorRT and YOLOv5.

- Background subtraction algorithms and postural sway analysis:

  - Identify the patient’s state from the sway amplitude while minimizing the interference of the environment with the important image information that needs to be recognized.
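To illustrate the Hidden Markov Model idea from the list above, here is a minimal Viterbi decoder that recovers the most likely sequence of body states from coarse per-frame observations. It is a sketch under stated assumptions: the three states, the observation labels (`upright`, `tilted`, `horizontal`), and every probability in the tables are made up for illustration, not learned from patient data.

```python
import math

STATES = ["standing", "falling", "lying"]

# All probabilities below are illustrative assumptions.
START = {"standing": 0.6, "falling": 0.1, "lying": 0.3}
TRANS = {  # P(next state | previous state): the previous state shapes what comes next
    "standing": {"standing": 0.90, "falling": 0.05, "lying": 0.05},
    "falling": {"standing": 0.05, "falling": 0.50, "lying": 0.45},
    "lying": {"standing": 0.05, "falling": 0.01, "lying": 0.94},
}
EMIT = {  # P(observed torso orientation | state)
    "standing": {"upright": 0.85, "tilted": 0.10, "horizontal": 0.05},
    "falling": {"upright": 0.10, "tilted": 0.70, "horizontal": 0.20},
    "lying": {"upright": 0.05, "tilted": 0.15, "horizontal": 0.80},
}


def viterbi(observations):
    """Return the most likely hidden state sequence for the observations."""
    if not observations:
        return []
    # Work in log space to avoid numerical underflow on long sequences.
    scores = {s: math.log(START[s]) + math.log(EMIT[s][observations[0]]) for s in STATES}
    backpointers = []
    for obs in observations[1:]:
        new_scores, pointers = {}, {}
        for s in STATES:
            best_prev = max(STATES, key=lambda p: scores[p] + math.log(TRANS[p][s]))
            new_scores[s] = (scores[best_prev] + math.log(TRANS[best_prev][s])
                             + math.log(EMIT[s][obs]))
            pointers[s] = best_prev
        scores = new_scores
        backpointers.append(pointers)
    # Trace the best path backwards from the most likely final state.
    state = max(STATES, key=scores.get)
    path = [state]
    for pointers in reversed(backpointers):
        state = pointers[state]
        path.append(state)
    path.reverse()
    return path


if __name__ == "__main__":
    print(viterbi(["upright", "upright", "tilted", "horizontal", "horizontal"]))
```

With these assumed tables, a tilted frame between upright and horizontal frames is decoded as a fall, while a sequence that is horizontal from the start is decoded as lying down throughout. That is how the model separates falling from lying down: the transition probabilities encode which states plausibly follow one another.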

Digital Pathology Diagnosis

Traditional pathological image processing is carried out manually. Pathologists examine specifically stained pathological images under the microscope with the naked eye, identify and count the different types of cells, and calculate the positive rate.

You might have some doubts about this approach:

- The diagnosis results are subjective, vary from person to person, and have poor repeatability;

- Completing the whole process by hand is inefficient for pathologists;

- Observations are often based on estimates, so the proportions are not accurate enough;

- Pathological results are stored as slides, which are inconvenient to view, store, and retrieve.

Applying deep learning to assisted diagnosis based on digital pathological images can improve the efficiency and quantitative accuracy of disease diagnosis, target detection, and region-of-interest segmentation. It can also ease the constraints imposed by economic conditions, geographical environment, and medical infrastructure, and making full use of digital pathology images can significantly reduce costs.

In digital pathology, edge AI-based methods can be applied to the detection and segmentation of regions of interest (ROIs) and to high-level pattern prediction for disease diagnosis.

How does AI-powered diagnosis work?

Edge AI enables automated image analysis of whole slide images (WSIs) through machine learning. The diagnostic flow of AI in pathology is as follows: pathological slides are captured by a scanner as full-slide digital images. With the support of a WSI system, full-slide imaging gives users an expanded toolset, including digital annotation, rapid navigation and zooming, and computer-aided viewing and analysis.

To get the most out of the available image data with minimal annotation, weakly supervised learning methods are usually adopted so that machine learning can build more accurate models.

In addition, a WSI can reach gigapixel size, which consumes excessive GPU memory and makes analysis difficult. At present, typical computers cannot process WSIs at full pixel resolution, which can lead to a significant decline in recognition accuracy.
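A quick back-of-the-envelope calculation shows why gigapixel images strain GPU memory. The slide dimensions below (100,000 × 100,000 pixels, 8-bit RGB) are an assumed example, not a figure from a specific scanner, and `uncompressed_size_gib` is a hypothetical helper written for this sketch.

```python
def uncompressed_size_gib(width_px, height_px, channels=3, bytes_per_channel=1):
    """Size of a raw, uncompressed image in GiB."""
    return width_px * height_px * channels * bytes_per_channel / 2**30


if __name__ == "__main__":
    # Assumed slide size: 100,000 x 100,000 pixels (10 gigapixels), 8-bit RGB.
    as_uint8 = uncompressed_size_gib(100_000, 100_000)
    # The same image converted to 32-bit floats, as many models expect their input.
    as_float32 = uncompressed_size_gib(100_000, 100_000, bytes_per_channel=4)
    print(f"{as_uint8:.1f} GiB as uint8, {as_float32:.1f} GiB as float32")
    # prints "27.9 GiB as uint8, 111.8 GiB as float32"
```

Under these assumptions, the input alone is tens of gibibytes before any intermediate feature maps are counted, which is why whole slides are rarely fed to a network in one piece.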

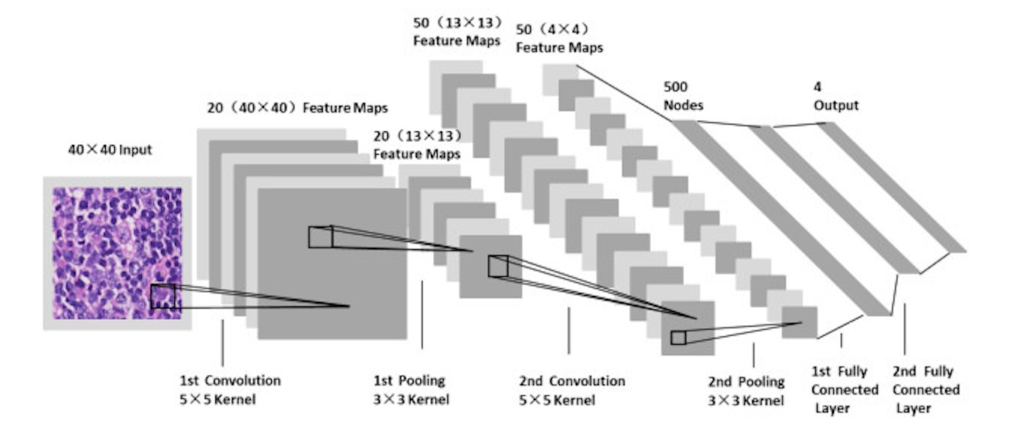

Therefore, a sliding-window detection and classification model, composed of a CNN and a grid-based attention network, divides the image into local blocks (patches), analyzes each patch and extracts its features, and then aggregates the information to obtain a result. This approach reduces memory usage and improves model efficiency.

The processing flow of a convolutional neural network for detecting visual categories in images:
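The patch-based flow can be sketched in a few lines. This is a simplified illustration, not the grid-based attention network mentioned above: a stand-in scoring function replaces the CNN, and the slide-level result is the mean of the top-scoring patches, a simpler aggregation swapped in for attention. The function names and the tiny 4×4 "slide" are assumptions for this example.

```python
def patch_origins(width, height, patch_size, stride):
    """Yield the top-left (x, y) of every full patch of a sliding window."""
    for y in range(0, height - patch_size + 1, stride):
        for x in range(0, width - patch_size + 1, stride):
            yield x, y


def mean_intensity(patch):
    """Stand-in for a CNN: the mean pixel value of the patch."""
    values = [v for row in patch for v in row]
    return sum(values) / len(values)


def classify_slide(image, patch_size, stride, score_patch=mean_intensity, top_k=3):
    """Score each patch independently, then average the top-k patch scores."""
    height, width = len(image), len(image[0])
    scores = []
    for x, y in patch_origins(width, height, patch_size, stride):
        # Only one patch is held in memory at a time, never the whole slide tensor.
        patch = [row[x:x + patch_size] for row in image[y:y + patch_size]]
        scores.append(score_patch(patch))
    top = sorted(scores, reverse=True)[:top_k]
    return sum(top) / len(top) if top else 0.0


if __name__ == "__main__":
    # Tiny grayscale "slide" with one bright 2x2 region in the lower right.
    slide = [
        [0, 0, 0, 0],
        [0, 0, 0, 0],
        [0, 0, 1, 1],
        [0, 0, 1, 1],
    ]
    print(classify_slide(slide, patch_size=2, stride=2, top_k=1))
```

Because each patch is scored on its own, peak memory depends on the patch size rather than the slide size, which is the property that makes this approach workable on memory-constrained hardware.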

At GTC 2021, Grundium Ltd showed how whole-slide scanners for pathology can be rethought by utilizing the computational capability of the NVIDIA Jetson platform. Deep learning-based image analysis can be interleaved with the scanning process. Therefore, the results can be ready when the scan is complete, increasing the diagnosis throughput.

NVIDIA’s blog post AI at the Point of Care: Startup’s Portable Scanner Diagnoses Brain Stroke in Minutes describes a lightweight brain-scanning device powered by NVIDIA Jetson AGX Xavier, which offers 32 TeraOps of AI performance with energy-efficient inference at the edge. The intelligent embedded system analyzes the brain signals to help identify a further diagnosis.

The upcoming Jetson Orin Nano module is also being considered for the next generation. We just announced reComputer devices based on Orin NX and Orin Nano. Let us know your thoughts!

You can also use MathWorks GPU Coder to deploy the prediction pipeline on NVIDIA Jetson.

The goal of the solution is to train a classifier to distinguish between Arrhythmia (ARR), Congestive Heart Failure (CHF), and Normal Sinus Rhythm (NSR). The tutorial uses ECG data obtained from three groups or classes:

- People with cardiac arrhythmia

- People with congestive heart failure

- People with normal sinus rhythms

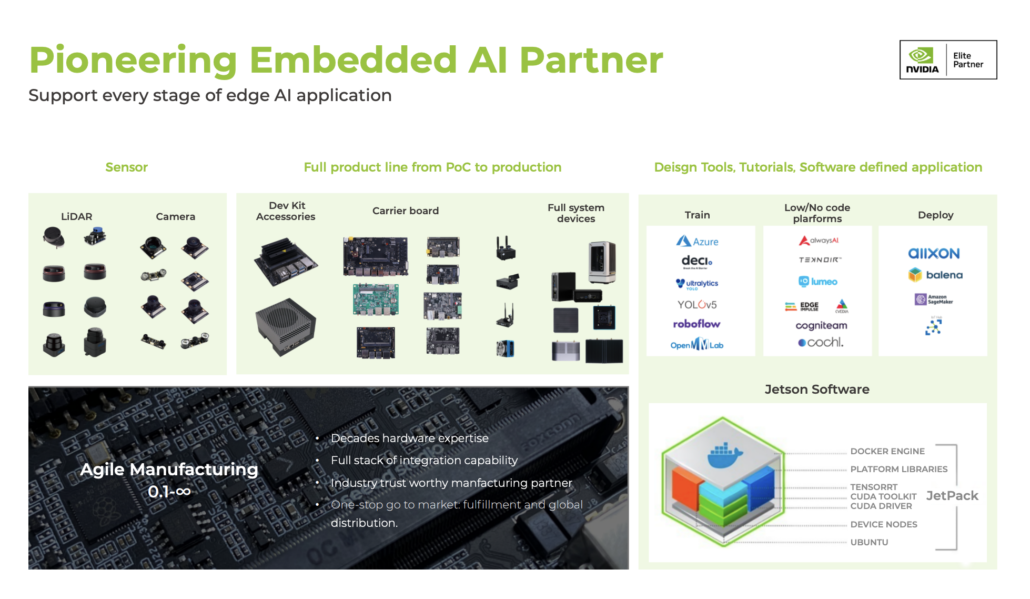

Seeed NVIDIA Jetson Ecosystem

Seeed is an Elite partner for edge AI in the NVIDIA Partner Network. Explore more carrier boards, full system devices, customization services, use cases, and developer tools on Seeed’s NVIDIA Jetson ecosystem page.

Join the forefront of AI innovation with us! Harness the power of cutting-edge hardware and technology to revolutionize the deployment of machine learning in the real world across industries. Be a part of our mission to provide developers and enterprises with the best ML solutions available. Check out our successful case study catalog to discover more edge AI possibilities!

Take the first step and send us an email at [email protected] to become a part of this exciting journey!

Download our latest Jetson Catalog to find one option that suits you well. If you can’t find the off-the-shelf Jetson hardware solution for your needs, please check out our customization services, and submit a new product inquiry to us at [email protected] for evaluation.