Introduction

Object detection is a core task in computer vision: locating the objects in an image and assigning each one a class. It has practical applications in sectors such as surveillance, healthcare, and self-driving cars. Single-stage object detectors are a subset of detection models that predict object classes and bounding box coordinates directly, without an initial region proposal stage. This shortens the pipeline and is especially useful for real-time applications. One prominent example is the YOLO (You Only Look Once) family of models, whose efficient single-stage design suits scenarios where speed is crucial and accuracy still matters.

YOLO

YOLO (You Only Look Once) was a breakthrough in object detection because it treated detection as a regression task solved in a single stage. The network looks at the image only once and, in that one pass, predicts both the position of each object and its class.
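To make the "regression" idea concrete, here is a minimal sketch of how one grid-cell prediction can be turned into a pixel-space box. It assumes the encoding used by the original YOLO (centre offsets relative to the grid cell, width and height relative to the whole image); YOLOv5 uses an anchor-based variant with a different decoding. The function name and the example numbers are illustrative only.

```python
from typing import Tuple


def decode_cell_prediction(
    row: int,
    col: int,
    x_off: float,
    y_off: float,
    w_rel: float,
    h_rel: float,
    grid_size: int,
    img_w: int,
    img_h: int,
) -> Tuple[float, float, float, float]:
    """Convert one grid-cell prediction to pixel corners (x1, y1, x2, y2).

    Assumed encoding (original YOLO style):
      x_off, y_off: box centre as a fraction of the cell, in [0, 1]
      w_rel, h_rel: box size as a fraction of the full image, in [0, 1]
    """
    cell_w = img_w / grid_size
    cell_h = img_h / grid_size
    # Box centre in pixels: cell origin plus the offset inside the cell.
    cx = (col + x_off) * cell_w
    cy = (row + y_off) * cell_h
    # Box size in pixels.
    bw = w_rel * img_w
    bh = h_rel * img_h
    return (cx - bw / 2, cy - bh / 2, cx + bw / 2, cy + bh / 2)


# Centre cell of a 7x7 grid on a 448x448 image, box centred in that cell.
box = decode_cell_prediction(
    row=3, col=3, x_off=0.5, y_off=0.5, w_rel=0.25, h_rel=0.5,
    grid_size=7, img_w=448, img_h=448,
)
print(box)  # (168.0, 112.0, 280.0, 336.0)
```

Because every cell emits its numbers in the same forward pass, no separate proposal step is needed; the detector only has to decode and filter the raw outputs.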

YOLOv5

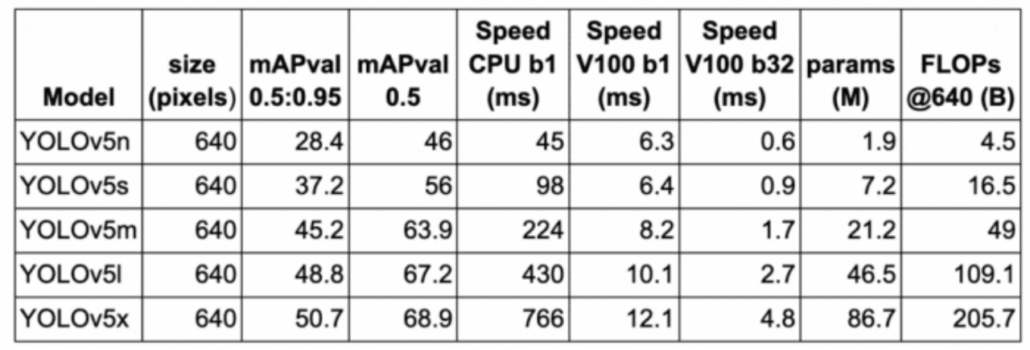

The YOLOv5 series is a family of object detection architectures that come pre-trained on the MS COCO dataset. It was released after EfficientDet and YOLOv4. YOLOv5 is widely used today, benefiting from extensive support and good usability in production scenarios.

The notable aspect of YOLOv5 is its native implementation in PyTorch, which avoids the constraints of the Darknet framework. Darknet is written in C and is valuable for research, but it was not designed with production in mind, and it has a smaller community and limited support.

Moving YOLO to PyTorch makes the architecture easier to modify and simpler to deploy across different environments. YOLOv5 is also listed on PyTorch Hub, so a pre-trained model can be loaded directly from Python.
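As a rough sketch of what the PyTorch Hub route looks like, the snippet below loads the pre-trained YOLOv5n weights and runs them on one image. It assumes PyTorch is installed and that the machine can reach GitHub on the first call; the helper names and the image path in the usage note are placeholders, not part of any official API.

```python
MODEL_REPO = "ultralytics/yolov5"  # GitHub repository served through PyTorch Hub
MODEL_NAME = "yolov5n"             # Nano variant


def load_yolov5n():
    """Download (on first call) and return the pre-trained YOLOv5n model."""
    # Imported here so the file can be read and imported without PyTorch.
    import torch

    return torch.hub.load(MODEL_REPO, MODEL_NAME, pretrained=True)


def detect(image_path: str):
    """Run YOLOv5n on one image and return its detections.

    The returned tensor has one row per detection:
    x1, y1, x2, y2, confidence, class index.
    """
    model = load_yolov5n()
    results = model(image_path)
    results.print()  # short text summary of what was found
    return results.xyxy[0]
```

Calling `detect("site_photo.jpg")` (a hypothetical file name) would download the weights on first use and then print a one-line summary of the detected classes.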

YOLOv5n

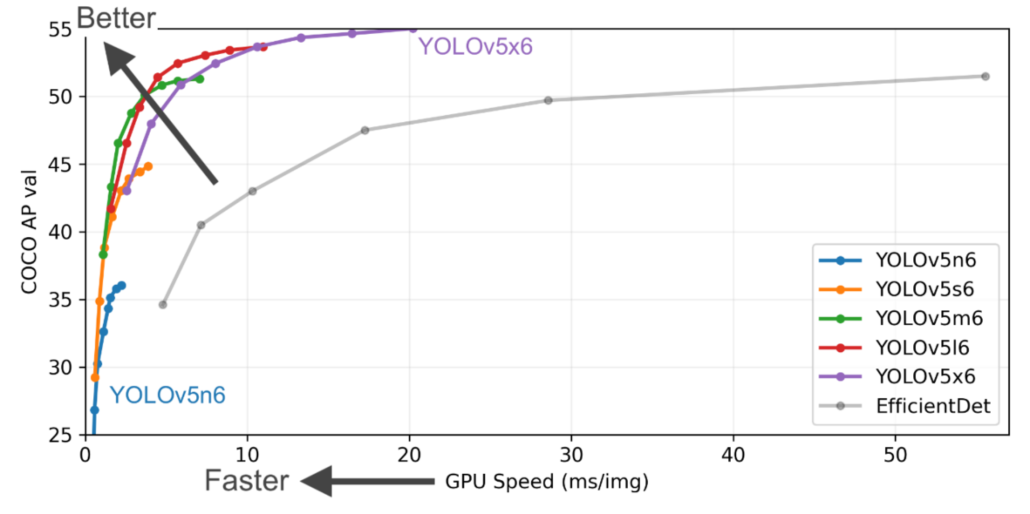

The YOLOv5n and YOLOv5n6 models, known as the Nano models, have roughly 75% fewer parameters than YOLOv5s, the previous smallest model. The parameter count drops from 7.5 million to 1.9 million, which makes them suitable for deployment on mobile devices and CPUs. The chart above also shows YOLOv5 performing notably better than various versions of EfficientDet.
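The 75% figure follows directly from the two parameter counts. The short sketch below checks it and adds a back-of-the-envelope estimate of the weight storage, assuming 4 bytes per parameter for float32 and 1 byte for 8-bit weights. These are weights-only estimates and ignore file-format overhead; the function names are illustrative.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fraction by which `after` is smaller than `before`."""
    return (before - after) / before


def weights_megabytes(params: float, bytes_per_param: int) -> float:
    """Approximate weight storage in MB (1 MB = 1e6 bytes)."""
    return params * bytes_per_param / 1e6


PARAMS_BEFORE = 7.5e6  # parameter count before the Nano models
PARAMS_NANO = 1.9e6    # YOLOv5n

reduction = relative_reduction(PARAMS_BEFORE, PARAMS_NANO)
print(f"{reduction:.1%}")  # 74.7%

fp32_mb = weights_megabytes(PARAMS_NANO, 4)  # about 7.6 MB as float32
int8_mb = weights_megabytes(PARAMS_NANO, 1)  # about 1.9 MB as 8-bit
```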

Even the smallest variant shown, YOLOv5n6, reaches accuracy comparable to EfficientDet while running faster. This makes YOLOv5 a good choice where both accuracy and fast processing matter.

Demo

Before deploying a model on a resource-constrained device such as a Raspberry Pi, it is usually necessary to convert and quantize the model to get acceptable performance. Here, that means converting the PyTorch model (in .pt format) to a TensorFlow Lite (TFLite) model quantized to the uint8 data type.
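The sketch below illustrates the two ideas in that step. First, it builds the command line for the `export.py` script in the ultralytics/yolov5 repository; the flags shown exist there at the time of writing, but check them against your checkout, and note that the demo repository may use different steps. Second, it shows the affine mapping that 8-bit quantization relies on, `real = scale * (q - zero_point)`, in plain Python. The function names, the 320-pixel input size, and the example value range are assumptions made for illustration.

```python
from typing import List, Tuple


def export_command(weights: str = "yolov5n.pt", img_size: int = 320) -> List[str]:
    """Arguments for exporting a .pt model to an 8-bit TFLite model.

    Intended to be run from the root of a yolov5 checkout,
    for example with subprocess.run(export_command(), check=True).
    """
    return [
        "python", "export.py",
        "--weights", weights,
        "--include", "tflite",
        "--int8",
        "--imgsz", str(img_size),
    ]


def quant_params(lo: float, hi: float) -> Tuple[float, int]:
    """Scale and zero point that map the real range [lo, hi] onto 0..255."""
    scale = (hi - lo) / 255.0
    zero_point = round(-lo / scale)
    return scale, zero_point


def quantize(x: float, scale: float, zero_point: int) -> int:
    """Map a real value to uint8, clipping values outside the range."""
    q = round(x / scale) + zero_point
    return max(0, min(255, q))


def dequantize(q: int, scale: float, zero_point: int) -> float:
    """Recover the approximate real value from its uint8 code."""
    return scale * (q - zero_point)


# Example: values assumed to lie in [-1.0, 1.0].
scale, zero_point = quant_params(-1.0, 1.0)
q = quantize(0.3, scale, zero_point)
approx = dequantize(q, scale, zero_point)
print(q, approx)  # approx differs from 0.3 by at most scale / 2
```

The rounding error per value is bounded by half the scale, which is why quantized models usually lose only a little accuracy while shrinking to roughly a quarter of their float32 size.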

You can also train the model on your own custom dataset. This demonstration runs on the reTerminal DM, which is powered by the Raspberry Pi CM4 and designed primarily for industrial applications. The demo focuses on object detection in the construction domain, specifically identifying Personal Protective Equipment (PPE) kits, a critical aspect of safety in this industry.

To try it yourself, clone the model onto your reTerminal from the following repositories:

- https://github.com/KasunThushara/yoloV5n_RPI

- https://github.com/ultralytics/yolov5

Conclusion

In conclusion, bringing object detection to the reTerminal DM opens up a wide range of industrial applications. With YOLOv5n providing real-time object detection, the device can support quality control, worker safety, asset management, and predictive maintenance, helping industries improve efficiency, safety, and productivity. Combining the reTerminal DM's features with robust object detection offers an integrated and efficient approach to industrial device management and data flow, and supports smarter, more proactive decision-making in which objects and assets can be monitored accurately.

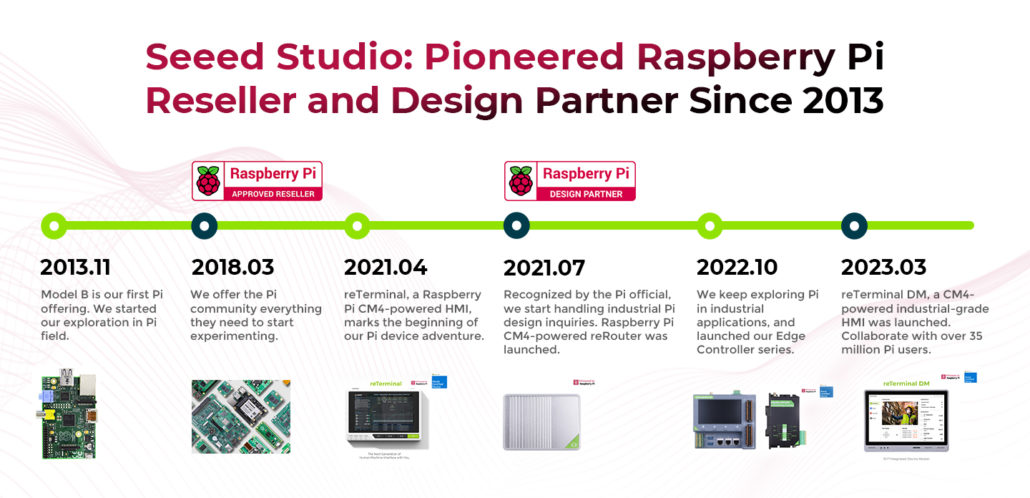

Seeed Studio's Raspberry Pi Ecosystem

Seeed Studio has been serving the Raspberry Pi user community since 2013 and took the lead in becoming an approved reseller and design partner. Since the first version of reTerminal in 2021, we have released a series of products including reRouter, the edge controller series, and, this year, reTerminal DM. They serve creators, makers, enthusiasts, students, engineers, enterprises, and industries, as well as every scenario that needs a Raspberry Pi.

More Resources

- Explore more products, full system devices, customization services, and use cases on the Seeed Raspberry Pi page.

- Download our latest Raspberry Pi success case booklet to learn how Seeed and Seeed’s Raspberry Pi-powered products and solutions help tackle real-world challenges. If you need any customization based on Raspberry Pi, please check out our customization services.

- Download our latest product catalog to find the ones that suit your needs.

- Want to discuss more customization possibilities? Please check out our customization services and submit your inquiry to [email protected] for a deeper discussion and evaluation.