Build Connected Robots with NVIDIA Isaac and ROS2

ROS (Robot Operating System) is a set of open-source software libraries and tools that help you build robot applications. It is also a community where professionals and hobbyists collaborate and share code, so engineers can quickly reuse existing building blocks and find all the tools they need to build a fully functional robot. You may have noticed that there are two versions, ROS1 and ROS2, and it can be hard to decide which one to start with or when to switch. Let's find out what ROS2 is and why we should be excited about it compared to the previous version.

What is ROS2?

ROS2 is a revamp of the Robot Operating System framework, rebuilt from the ground up for commercial and industrial use and designed to scale to new applications such as mobile robotics, drone swarms, and self-driving cars.

First, let's look at some basic ROS2 concepts:

DDS: Data Distribution Service, the communication middleware standard that ROS2 builds on to move data between all parts of the system.

Nodes: running pieces of code that use ROS2 functionality. A named channel through which nodes exchange messages is called a topic.

Nodes have three primary ways in which they can communicate with each other.

- through the publisher/subscriber method: one node publishes information, referred to as a message, on a topic, and every node subscribed to that topic receives it. This architecture is scalable, since one publisher can send messages to multiple subscribers.

- through the services method: a service client node sends a request to another node, called the service server, which is responsible for completing the request. After completing the request, the service server sends back a response.

- through the actions method: an action client node sends a goal to another node acting as the action server. The action server processes the goal and sends progress updates, referred to as feedback, to the client until the goal is reached. Finally, the action server sends the result of the action back to the client to report that the goal has been reached.
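The three communication patterns above can be modeled in a few lines of plain Python. This is a minimal in-process sketch that only mimics their semantics; it is not the rclpy API (in rclpy the corresponding entry points are `Node.create_publisher`, `Node.create_subscription`, `Node.create_service`, `Node.create_client`, and the `rclpy.action.ActionServer` / `ActionClient` classes). The `Bus` class, `move_to` function, and topic/service names are hypothetical.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, Iterator, List


class Bus:
    """Hypothetical in-process stand-in for the ROS graph (not rclpy)."""

    def __init__(self) -> None:
        self.subscribers: Dict[str, List[Callable[[Any], None]]] = defaultdict(list)
        self.services: Dict[str, Callable[[Any], Any]] = {}

    # Publisher/subscriber: one publish fans out to every subscriber of the topic.
    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self.subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> None:
        for callback in self.subscribers[topic]:
            callback(message)

    # Service: a client sends one request and gets exactly one response back.
    def advertise_service(self, name: str, handler: Callable[[Any], Any]) -> None:
        self.services[name] = handler

    def call_service(self, name: str, request: Any) -> Any:
        return self.services[name](request)


def move_to(goal: int, start: int = 0) -> Iterator[dict]:
    """Action sketch: yield feedback until the goal is reached, then one result."""
    position = start
    while position < goal:
        position += 1
        yield {"feedback": position}  # progress update sent to the client
    yield {"result": "reached", "position": position}


bus = Bus()
received_a: List[str] = []
received_b: List[str] = []
bus.subscribe("chatter", received_a.append)
bus.subscribe("chatter", received_b.append)
bus.publish("chatter", "hello")  # both subscribers receive the same message

bus.advertise_service("add_two_ints", lambda req: req[0] + req[1])
total = bus.call_service("add_two_ints", (2, 3))

updates = list(move_to(3))
feedback = [u["feedback"] for u in updates if "feedback" in u]
result = updates[-1]
```

The generator makes the action pattern's shape visible: a stream of feedback messages followed by a single result, whereas a service call returns exactly one response and a publish has no response at all.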

Node parameters: configuration values attached to a node, which let you change specific variable values at startup or while the node runs, instead of editing the code and recompiling the project every time.
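The idea behind node parameters can be sketched as "declared defaults plus external overrides". In rclpy this is done with `Node.declare_parameter` and `Node.get_parameter`, and values can be overridden at launch with `--ros-args -p name:=value`. The `ParameterStore` class below is a hypothetical, stdlib-only model of that behavior, not the rclpy implementation, and the parameter names are illustrative.

```python
from typing import Any, Dict, Optional


class ParameterStore:
    """Hypothetical model of node parameters (not the rclpy API)."""

    def __init__(self, overrides: Optional[Dict[str, Any]] = None) -> None:
        # Overrides stand in for values supplied at launch, e.g. on the command line.
        self._overrides = dict(overrides or {})
        self._values: Dict[str, Any] = {}

    def declare(self, name: str, default: Any) -> Any:
        """Declare a parameter; an override, if present, takes precedence."""
        value = self._overrides.get(name, default)
        self._check_type(name, value, default)
        self._values[name] = value
        return value

    def get(self, name: str) -> Any:
        return self._values[name]

    def set(self, name: str, value: Any) -> None:
        """Change a declared parameter at run time, without recompiling anything."""
        if name not in self._values:
            raise KeyError(f"parameter {name!r} was never declared")
        self._check_type(name, value, self._values[name])
        self._values[name] = value

    @staticmethod
    def _check_type(name: str, value: Any, reference: Any) -> None:
        # Keep each parameter's type stable, e.g. a float stays a float.
        if type(value) is not type(reference):
            raise TypeError(f"parameter {name!r} expects {type(reference).__name__}")


# The code ships with defaults; the launch configuration overrides one of them.
params = ParameterStore(overrides={"max_speed": 0.5})
max_speed = params.declare("max_speed", 1.0)         # override wins
frame_id = params.declare("frame_id", "base_link")   # default is used
params.set("max_speed", 0.8)                         # runtime update
```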

Why the Arrival of ROS2 Matters

ROS1 was initially built and released by Willow Garage in 2007 to accelerate robotics research. Because it was designed as a research tool rather than for commercial use, issues such as security, network topology, and system uptime were not priorities. As ROS has been adopted in the commercial world, many of these drawbacks have become apparent, and it became necessary to rebuild ROS from scratch for commercial use. The result is ROS2, which adds the following provisions to meet commercial requirements:

- Security: it needs to be safe, with proper encryption where needed

- Embedded systems: ROS2 needs to be able to run on embedded systems

- Diverse networks: it needs to run and communicate across vast networks, from a LAN to multi-hop satellite links, to accommodate the variety of environments where robots operate and need to communicate

- Real-time computing: it needs to perform computation reliably in real time, since runtime efficiency is crucial in robotics

- Product readiness: it needs to conform to relevant safety and industrial standards so that it is ready for market

Changes between ROS1 and ROS2

- Using DDS as the middleware for all communication gives ROS2 stronger security and reliability guarantees than ROS1

- ROS2 eliminates ROS1's single point of failure (the ROS master), which increases the fault tolerance of the system

- In ROS1, the ROS master acts as an intermediary: it identifies which nodes need to be connected, and those nodes then open direct connections to each other. When the ROS master fails, nodes that are already connected can keep communicating, but they become isolated and cannot discover or connect to any new nodes

- ROS2 performs better than ROS1 on weak or lossy networks, such as Wi-Fi or satellite links

- ROS1 performs well on reliable networks because its transport is built on TCP, but on unreliable networks TCP's retransmissions cause delays and stalls. ROS2 instead relies on DDS, which typically runs over UDP and lets each connection choose a QoS policy (for example, best-effort or reliable delivery) that suits the network condition

- ROS2 client libraries share a common underlying implementation, rcl (the ROS Client Library)

- In ROS1, each client library (such as roscpp or rospy) is written entirely in its own language. In ROS2, the language-specific client libraries (such as rclcpp and rclpy) wrap the common rcl implementation, which generally provides more consistent performance across languages and makes it easier for developers to create client libraries for new languages.
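The difference in discovery described above can be illustrated with a toy model: a central registry that stops answering once it is down, versus peers that announce themselves directly to each other. This is a deliberately simplified, hypothetical sketch; the real ROS1 master speaks XML-RPC and DDS uses its own discovery protocol (typically over UDP multicast), neither of which is reproduced here. All class, node, and topic names are illustrative.

```python
from typing import Dict, List, Set


class Master:
    """ROS1-style central registry (toy model): maps topics to publisher names."""

    def __init__(self) -> None:
        self.alive = True
        self.publishers: Dict[str, Set[str]] = {}

    def register_publisher(self, topic: str, node: str) -> None:
        if not self.alive:
            raise ConnectionError("master unreachable")
        self.publishers.setdefault(topic, set()).add(node)

    def lookup(self, topic: str) -> Set[str]:
        if not self.alive:
            raise ConnectionError("master unreachable")
        return set(self.publishers.get(topic, set()))


class Participant:
    """DDS-style participant (toy model): announces itself to every peer directly."""

    def __init__(self, name: str, topics: List[str]) -> None:
        self.name = name
        self.topics = topics
        self.known: Dict[str, List[str]] = {}  # peer name -> topics it publishes

    def announce(self, peers: List["Participant"]) -> None:
        # Stands in for a multicast announcement: no central registry is involved.
        for peer in peers:
            if peer is not self:
                peer.known[self.name] = list(self.topics)
                self.known[peer.name] = list(peer.topics)

    def publishers_of(self, topic: str) -> Set[str]:
        return {name for name, topics in self.known.items() if topic in topics}


# ROS1-style: once the master is down, a lookup for a new connection fails.
master = Master()
master.register_publisher("/scan", "lidar")
master.alive = False
try:
    master.lookup("/scan")
    ros1_lookup_ok = True
except ConnectionError:
    ros1_lookup_ok = False

# DDS-style: a node that joins later is still discovered, with no master involved.
lidar = Participant("lidar", ["/scan"])
planner = Participant("planner", [])
network = [lidar, planner]
lidar.announce(network)
camera = Participant("camera", ["/image"])
network.append(camera)
camera.announce(network)
```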

Why You Should Be Excited About ROS2

- Modern API, minimal dependencies, and better portability

- Benefits of the DDS-based RMW (ROS middleware):

  - reliability QoS settings

  - UDP multicast, shared memory, TLS over TCP/IP

  - real-time capable

  - master-less discovery

- Easier to work with multiple nodes in one process

- More dynamic run-time features like topic remapping and aliasing

- Dynamic parameters

- Synchronous, scheduled execution of nodes

- More efficient package resource management
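Two of the QoS settings mentioned above, reliability and KEEP_LAST history depth, can be illustrated with a small simulation. In rclpy these are configured through `rclpy.qos.QoSProfile` with `ReliabilityPolicy` and `HistoryPolicy`; the sketch below does not use that API. It assumes a hypothetical lossy link where a fixed set of transmission attempts is dropped, and it models reliable delivery as a simple retry loop, which is a simplification of the acknowledgment-based mechanism DDS actually uses.

```python
from collections import deque
from enum import Enum
from typing import Deque, Iterable, List, Set


class Reliability(Enum):
    BEST_EFFORT = "best_effort"  # send once; lost samples stay lost
    RELIABLE = "reliable"        # retry until the sample gets through


def transmit(samples: Iterable[int], lost_attempts: Set[int],
             reliability: Reliability, max_retries: int = 5) -> List[int]:
    """Toy link model: transmission attempt i (counted globally) is dropped if i is in lost_attempts."""
    delivered: List[int] = []
    attempt = 0
    for sample in samples:
        tries = 1 if reliability is Reliability.BEST_EFFORT else 1 + max_retries
        for _ in range(tries):
            lost = attempt in lost_attempts
            attempt += 1
            if not lost:
                delivered.append(sample)
                break
    return delivered


def keep_last(samples: Iterable[int], depth: int) -> List[int]:
    """KEEP_LAST history: a bounded queue that silently drops the oldest samples."""
    history: Deque[int] = deque(maxlen=depth)
    for sample in samples:
        history.append(sample)
    return list(history)


samples = list(range(6))
lost = {1, 4}  # hypothetical: the 2nd and 5th transmission attempts are dropped
best_effort = transmit(samples, lost, Reliability.BEST_EFFORT)
reliable = transmit(samples, lost, Reliability.RELIABLE)
latest = keep_last(samples, depth=3)
```

Best-effort delivery skips the lost samples but never waits, which suits high-rate sensor streams; reliable delivery recovers every sample at the cost of extra round trips, which matters on the lossy links discussed earlier.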

How to Deploy & Where to Get Started

NVIDIA Jetson integrates DeepStream SDK and ROS

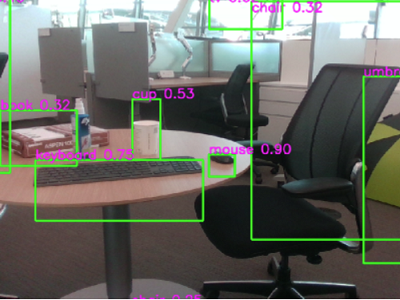

NVIDIA uses existing deep learning frameworks for model deployment, combines them with TensorRT to improve inference performance, and deploys various AI models for classification and object detection, including ResNet-18, MobileNetV1/V2, SSD, YOLO, and Faster R-CNN.

With the Isaac ROS encoder and decoder nodes, you can run a YOLOv5 model by pointing the inference node at it through model_file_path (an ONNX model) or engine_file_path (a prebuilt TensorRT engine). The inference node publishes its output as tensors of type isaac_ros_tensor_list_interfaces/TensorList to the decoder node. The decoder subscribes to these tensors from the TensorRT/Triton node, parses them into detections, and finally publishes the results as a Detection2DArray message for each image.
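The decoding step described above can be sketched as follows. This assumes the common YOLOv5 export layout in which each candidate row is `[cx, cy, w, h, objectness, class_score_0, class_score_1, ...]` in input-image pixels; the actual Isaac ROS YOLOv5 decoder may organize its tensors differently. The output dictionaries are a hypothetical stand-in for the fields of a Detection2D (box center and size, plus a class hypothesis with a score), and the thresholds are illustrative defaults.

```python
from typing import Dict, List, Sequence


def iou(a: Sequence[float], b: Sequence[float]) -> float:
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def decode_yolov5(rows: Sequence[Sequence[float]], conf_thresh: float = 0.25,
                  nms_thresh: float = 0.45) -> List[Dict]:
    """Turn raw YOLOv5-style rows into detections using greedy, per-class NMS."""
    candidates = []
    for cx, cy, w, h, obj, *class_scores in rows:
        class_id = max(range(len(class_scores)), key=class_scores.__getitem__)
        score = obj * class_scores[class_id]  # objectness times class probability
        if score < conf_thresh:
            continue
        box = (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)
        candidates.append({"class_id": class_id, "score": score, "box": box,
                           "center": (cx, cy), "size": (w, h)})

    # Keep the highest-scoring box and drop same-class boxes that overlap it heavily.
    candidates.sort(key=lambda d: d["score"], reverse=True)
    kept: List[Dict] = []
    for det in candidates:
        if all(k["class_id"] != det["class_id"] or iou(k["box"], det["box"]) < nms_thresh
               for k in kept):
            kept.append(det)
    return kept


# Hypothetical raw rows with two classes.
rows = [
    [100, 100, 50, 50, 0.9, 0.1, 0.8],   # class 1, score 0.72
    [102, 101, 50, 50, 0.8, 0.2, 0.7],   # overlaps the row above, same class: suppressed
    [300, 200, 40, 80, 0.9, 0.9, 0.05],  # class 0, score 0.81
    [50, 50, 10, 10, 0.1, 0.5, 0.5],     # score 0.05: below the threshold
]
detections = decode_yolov5(rows)
```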

For estimating the distance to obstacles, a great option is ESS (Efficient Semi-Supervised), a stereo depth estimation DNN that estimates disparity for a stereo image pair and returns a continuous disparity map for the given image. To combine it with ROS2, refer to the isaac_ros_stereo_image_proc, isaac_ros_ess, and isaac_ros_bi3d packages.
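A disparity map becomes a distance estimate through the standard relation for a rectified stereo pair: depth Z = f × B / d, where f is the focal length in pixels, B is the baseline between the two cameras in meters, and d is the disparity in pixels. The sketch below applies this per pixel; the focal length and baseline values are hypothetical, and it assumes the images are already rectified.

```python
from typing import List, Optional


def disparity_to_depth(disparity: List[List[float]], focal_px: float,
                       baseline_m: float,
                       min_disparity: float = 1e-6) -> List[List[Optional[float]]]:
    """Convert a disparity map (pixels) into depth (meters) using Z = f * B / d."""
    depth: List[List[Optional[float]]] = []
    for row in disparity:
        # A non-positive disparity means no valid stereo match (or a point at infinity).
        depth.append([focal_px * baseline_m / d if d > min_disparity else None
                      for d in row])
    return depth


# Hypothetical camera: 700 px focal length, 12 cm baseline.
disparity = [[42.0, 84.0], [0.0, 21.0]]
depth = disparity_to_depth(disparity, focal_px=700.0, baseline_m=0.12)
```

Because depth is inversely proportional to disparity, a one-pixel disparity error matters far more for distant obstacles (small d) than for nearby ones, which is one reason a continuous, sub-pixel disparity map is useful.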

For human pose estimation, the pretrained model infers 17 body parts (keypoints) based on the categories in the COCO dataset. You can use the ros2_trt_pose package to label your images and visualize the results, producing real-time image output with body joints and bones drawn on it.
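To see how detected joints turn into the "bones" drawn on the image, here is a minimal sketch. The 17 keypoint names follow the COCO person keypoint order; the `BONES` list is a hypothetical, simplified skeleton for illustration and is not necessarily the topology ros2_trt_pose uses. The confidence threshold is also an assumed value.

```python
from typing import List, Optional, Tuple

# The 17 COCO person keypoints, in the dataset's standard order.
COCO_KEYPOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]

# Hypothetical simplified skeleton: pairs of keypoints joined by a bone.
BONES = [
    ("left_shoulder", "right_shoulder"), ("left_shoulder", "left_elbow"),
    ("left_elbow", "left_wrist"), ("right_shoulder", "right_elbow"),
    ("right_elbow", "right_wrist"), ("left_shoulder", "left_hip"),
    ("right_shoulder", "right_hip"), ("left_hip", "right_hip"),
    ("left_hip", "left_knee"), ("left_knee", "left_ankle"),
    ("right_hip", "right_knee"), ("right_knee", "right_ankle"),
]

Keypoint = Optional[Tuple[float, float, float]]  # (x, y, confidence), or None if missing


def visible_bones(keypoints: List[Keypoint], threshold: float = 0.3) -> List[Tuple[str, str]]:
    """Return the bones whose two end joints were both detected above `threshold`."""
    index = {name: i for i, name in enumerate(COCO_KEYPOINTS)}

    def detected(name: str) -> bool:
        kp = keypoints[index[name]]
        return kp is not None and kp[2] >= threshold

    return [(a, b) for a, b in BONES if detected(a) and detected(b)]


keypoints: List[Keypoint] = [None] * len(COCO_KEYPOINTS)
keypoints[COCO_KEYPOINTS.index("left_shoulder")] = (120.0, 80.0, 0.9)
keypoints[COCO_KEYPOINTS.index("left_elbow")] = (110.0, 120.0, 0.8)
keypoints[COCO_KEYPOINTS.index("left_wrist")] = (105.0, 160.0, 0.2)  # low confidence
bones = visible_bones(keypoints)
```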

For building end-to-end AI-based solutions with multi-sensor processing and video and image understanding, NVIDIA provides ros2_deepstream nodes that perform two inference tasks based on the DeepStream Python Apps project: object detection and attribute classification. Each inference task also generates a display window that draws a bounding box and label around each detected object.

You can also learn more in NVIDIA's upcoming Isaac ROS webinar on Jan 17, 2023, about how to estimate obstacle distances with stereo cameras using bespoke, pretrained DNN models.

Get started with ROS

You can get started with ROS2 Humble Hawksbill, whose documentation guides you through concepts, tutorials, installation steps, and many exciting projects. ROS2 Humble supports Gazebo Fortress, a robot simulator that includes more than a dozen out-of-the-box sensors, such as segmentation cameras and GPS, along with a 3D graphical interface and more. Moreover, MoveIt for Humble advances the development of robotic arm manipulation systems, especially through its Hybrid Planning feature: combining a (slower) global motion planner with a (faster) local motion planner enables the robot to solve different tasks in online and dynamic environments.
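The global/local split behind hybrid planning can be illustrated with a toy 2D grid analogy. In the sketch below, a "slow" global planner searches the whole grid with breadth-first search, and a "fast" local loop simply follows that path one step at a time, asking for a new global plan only when the next cell suddenly becomes blocked. This is a hypothetical illustration of the idea, not MoveIt's Hybrid Planning API or algorithms; the grid, obstacle schedule, and function names are all assumptions.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]


def global_plan(start: Cell, goal: Cell, blocked: Set[Cell], size: int) -> Optional[List[Cell]]:
    """'Slow' global planner: breadth-first search over the whole grid."""
    parents: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path: List[Cell] = []
            node: Optional[Cell] = cell
            while node is not None:  # walk back from the goal to the start
                path.append(node)
                node = parents[node]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if (0 <= nxt[0] < size and 0 <= nxt[1] < size
                    and nxt not in blocked and nxt not in parents):
                parents[nxt] = cell
                queue.append(nxt)
    return None


def execute(start: Cell, goal: Cell, blocked: Set[Cell], size: int,
            appears: Dict[int, Cell]) -> Tuple[List[Cell], int]:
    """'Fast' local loop: follow the global path and replan when the next cell is blocked.

    `appears` maps a time step to an obstacle that shows up at that step.
    Returns the executed trajectory and the number of global replans.
    """
    blocked = set(blocked)
    path = global_plan(start, goal, blocked, size)
    trajectory, replans, step = [start], 0, 0
    while path and trajectory[-1] != goal:
        if step in appears:
            blocked.add(appears[step])  # the environment changes while moving
        current = trajectory[-1]
        nxt = path[path.index(current) + 1]
        if nxt in blocked:  # local check failed: request a new global plan
            path = global_plan(current, goal, blocked, size)
            replans += 1
            continue
        trajectory.append(nxt)
        step += 1
    return trajectory, replans


# A 3x3 grid: an obstacle appears at (2, 0) right before the robot would enter it.
trajectory, replans = execute((0, 0), (2, 2), set(), 3, appears={1: (2, 0)})
```

The local loop does almost no work per step, so it can react quickly, while the expensive search runs only when the environment invalidates the current plan.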

Manage the ROS2 Development Cycle with Nimbus

To better manage your ROS2 development cycle and simplify the robot integration process, you can also use Cogniteam Nimbus, which lets you focus on software development. Nimbus uses containerized applications as software components, which can be organized, wired, and reassembled from the web through code, a console interface, or a GUI. This way, anyone, even without ROS-specific knowledge, can understand and view the building blocks of robotic execution that make up these components. Nimbus also allows various ROS distributions (including ROS1 and ROS2 components) to run on the same robot, solving problematic coupling issues between OS and ROS versions.

Start ROS Development with Seeed Jetson Products

reComputer J2021: real-world AI at the Edge

Built with Jetson Xavier NX 8GB

J2021 is a hand-sized edge AI box built with the Jetson Xavier NX 8GB module, which delivers up to 21 TOPS of AI performance. It offers a rich set of I/O, including four USB 3.1 ports, M.2 Key E for Wi-Fi, M.2 Key M for SSD, RTC, CAN, and a Raspberry Pi 40-pin GPIO header, along with an aluminum case, a cooling fan, and a pre-installed JetPack system. As an alternative to the NVIDIA Jetson Xavier NX Dev Kit, it is ready for your next AI application development and deployment, and it is ideal for building autonomous applications and complex AI tasks such as image recognition, object detection, pose estimation, semantic segmentation, video processing, and more.

Carrier Board for Jetson Nano/Xavier NX/TX2 NX

The reComputer J202 carrier board has nearly the same design and functions as the NVIDIA® Jetson Xavier NX™ carrier board and works with Jetson Nano, Xavier NX, and TX2 NX modules. It provides four USB 3.1 ports, M.2 Key E for Wi-Fi, M.2 Key M for SSD, RTC, CAN, a Raspberry Pi 40-pin GPIO header, and more, accelerating your next AI application development and deployment. With its multiple camera connectors, it is suitable for running multiple neural networks in parallel for applications like image classification, object detection, segmentation, and speech processing.

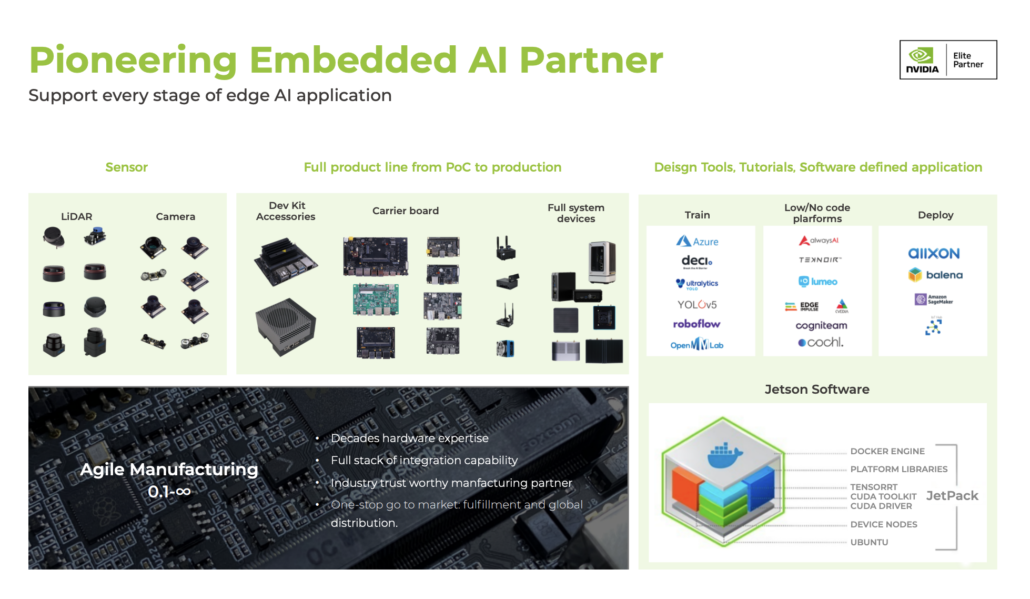

Seeed NVIDIA Jetson Ecosystem

Seeed is an Elite partner for edge AI in the NVIDIA Partner Network. Explore more carrier boards, full system devices, customization services, use cases, and developer tools on Seeed’s NVIDIA Jetson ecosystem page.

Join the forefront of AI innovation with us! Harness the power of cutting-edge hardware and technology to revolutionize the deployment of machine learning in the real world across industries. Be a part of our mission to provide developers and enterprises with the best ML solutions available. Check out our successful case study catalog to discover more edge AI possibilities!

Take the first step and send us an email at [email protected] to become a part of this exciting journey!

Download our latest Jetson Catalog to find the option that suits you best. If you can't find an off-the-shelf Jetson hardware solution for your needs, please check out our customization services and submit a new product inquiry to us at [email protected] for evaluation.